This course has finally come to an end, which is both a good, and bad thing. I definitely learned a lot, however there was many times throughout this course where I found it very boring. I think some parts were not interesting because it took me a long time to figure different concepts out, which was extremely annoying, and frustrating. Hopefully, if I am able to pass the course one thing I hope to do next year, is actually attend office hours whenever I am confused. Most of the time in this course I just waited until the end of the week to just review myself, which didn't always work out, because I usually ended up getting more frustrated with some of the concepts. Throughout the course I definitely felt weaker than most of the other students, because many of them seemed to have some past experience with some of the topics.

We ended this course with the concept Induction, which apparently is emphasized on a lot next year, so its important that I understand now rather than waiting to look at it later. Like most of the things I learned it took me a while to just get a basic and small understanding of the concept. I still don't have enough knowledge to actually write about it, or give my own insight on the concept. This week we also had our last assignment. When I first looked at it, I knew I would be able to solve the first two with a little time. 3, and 4 I knew how to approach the question but I didn't understand how to implement the definition of a limit to the proof, or disproof. After going through Piazza, course notes, and few other slogs ( http://celinasopiniononcsc165.blogspot.ca/) I had an idea of how to approach the problem. My partner also told me that all exponential functions were greater that polynomials which made my work way easier. I had difficulties understanding the last two because one I have trouble with halting, and two I understand the basics of proving general properties, and I am still not that strong in disproving general properties of Big O, Omega, and Theta. I think for the Final Exam the most important concepts I really need to focus on is Complexity of Algorithms, Counting steps, Halting, Proving using contradiction, proving of general properties, using Venn diagrams for some of the earlier concepts, and proving limits. I found one of the posts very helpful on one of the students slog (http://courselogcsc165.blogspot.ca/). The student explains the point of complexity and what we should consider when we determine it.

Overall if there was one concept which I felt pretty comfortable with, it would have to be proving Big Oh, Omega, and Theta. I have gone through few problems throughout all my slogs, but I mainly focused on the issues I had with some of the concepts.

Problem Solving Episode

For this slog I have decided to use Polya's problem solving technique to show a big Omega proof involving general properties. This is a very simple one, because I'm still practicing some more difficult ones for the exam.

Proof:

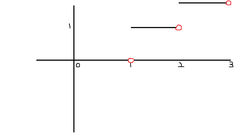

∀f : N -> R^(>=0) , ∀g : N -> R^(>=0) , g belongs to (big omega) (f) --> g^2 belongs to (big omega) (f^2)

Understand the Problem:

This proof is basically saying that for all functions if a function f is lower than function g, then it implies that the same function f squared is lower than the same function g squared.

Devising a plan:

The reason why I'm putting the definition, and proof in partly words, is because sometimes when its in English its more easier to understand. This is what helped me understand the problem, going straight into the symbols sometimes confuses me.

1. We have to first know what the definition of big omega is

∃ c belongs to R+, ∃ B belongs to N, ∀n belongs to N, n >= B --> f(n) >= c * g(n)

Basically the definition is saying that after a specific break point B, there exists a c that makes the function g(n) smaller than f(n)

2. You would have to use this definition within the proof, and the main goal to find a c and a B, but this should be done after the proof is done

3. It is very important to structure the proof before you do all the actually proving

4. Remember that f and g are both functions

Carrying out the plan:

Assume that f, and g are functions

Assume the g belongs to (big omega) f # antecedent

Then write in the definition of big omega

Pick a c = which belongs to R^(>=0) don't actually pick a c until the proof is done

Pick a B = which belongs to N, again don't pick until your done the proof

Assume the antecedent of the big omega proof

Then write in the consequent so we can simplify the functions in order to solve for the c and B, you should use different subscripts to distinguish the antecedent from the consq.

we know that n >= B subscript a which is the B from the antecedent

assuming previous knowledge on algebra we know that c (subscript b so we can show the c were using to show that the consq. is true) * (f^2(n)) is the same as c(subscript b) *( f(n) * f(n))

c(subscript B) * (f(n) * f(n) we know is equal to c(sub B) f(n) * c(sub B) f(n)

If you dont think this is true you can always try some examples choose the same c, and try it with a function, basically what the above is saying is for every g(n) from (g^(n) = g(n) * g(n)) there is one c(sub B) * f(n)

After this you can just multiply and get c^2(sub b) = f^2(n) which is also the same as (f * f ) (n)

Then you just conclude with the original proof given

****** I really wanted to just use words, hopefully people who find this type of proof difficult will understand now, but I'm assuming everyone should know how to do this. This is one of the first proofs I actually figured out myself during the lectures, and tutorial so funny enough it is a big accomplishment

Reviewing, and Looking Back at proof

Hopefully I didn't make a mistake in explaining this proof, I think the most important part for proofs like this is structuring, knowing the definition of for instance, the big oh, omega etc. and also knowing some different algebraic techniques. One thing that made it difficult was the fact that we didn't actually give a value to c. From before proofs we actually gave a number, constant in this case, but after you realize its just a general form, its not that difficult to understand.

Quote for the day

" Study hard for final exams, and try your best, the effort is all that counts "

Some slogs that I found very helpful

http://csc165.peet.io/ - For the coin problem he implemented some code, which I thought was very cool

http://logic-station.blogspot.ca/ - Simple and understandable explanation of halting, and counting steps of complex algorithms

We ended this course with the concept Induction, which apparently is emphasized on a lot next year, so its important that I understand now rather than waiting to look at it later. Like most of the things I learned it took me a while to just get a basic and small understanding of the concept. I still don't have enough knowledge to actually write about it, or give my own insight on the concept. This week we also had our last assignment. When I first looked at it, I knew I would be able to solve the first two with a little time. 3, and 4 I knew how to approach the question but I didn't understand how to implement the definition of a limit to the proof, or disproof. After going through Piazza, course notes, and few other slogs ( http://celinasopiniononcsc165.blogspot.ca/) I had an idea of how to approach the problem. My partner also told me that all exponential functions were greater that polynomials which made my work way easier. I had difficulties understanding the last two because one I have trouble with halting, and two I understand the basics of proving general properties, and I am still not that strong in disproving general properties of Big O, Omega, and Theta. I think for the Final Exam the most important concepts I really need to focus on is Complexity of Algorithms, Counting steps, Halting, Proving using contradiction, proving of general properties, using Venn diagrams for some of the earlier concepts, and proving limits. I found one of the posts very helpful on one of the students slog (http://courselogcsc165.blogspot.ca/). The student explains the point of complexity and what we should consider when we determine it.

Overall if there was one concept which I felt pretty comfortable with, it would have to be proving Big Oh, Omega, and Theta. I have gone through few problems throughout all my slogs, but I mainly focused on the issues I had with some of the concepts.

Problem Solving Episode

For this slog I have decided to use Polya's problem solving technique to show a big Omega proof involving general properties. This is a very simple one, because I'm still practicing some more difficult ones for the exam.

Proof:

∀f : N -> R^(>=0) , ∀g : N -> R^(>=0) , g belongs to (big omega) (f) --> g^2 belongs to (big omega) (f^2)

Understand the Problem:

This proof is basically saying that for all functions if a function f is lower than function g, then it implies that the same function f squared is lower than the same function g squared.

Devising a plan:

The reason why I'm putting the definition, and proof in partly words, is because sometimes when its in English its more easier to understand. This is what helped me understand the problem, going straight into the symbols sometimes confuses me.

1. We have to first know what the definition of big omega is

∃ c belongs to R+, ∃ B belongs to N, ∀n belongs to N, n >= B --> f(n) >= c * g(n)

Basically the definition is saying that after a specific break point B, there exists a c that makes the function g(n) smaller than f(n)

2. You would have to use this definition within the proof, and the main goal to find a c and a B, but this should be done after the proof is done

3. It is very important to structure the proof before you do all the actually proving

4. Remember that f and g are both functions

Carrying out the plan:

Assume that f, and g are functions

Assume the g belongs to (big omega) f # antecedent

Then write in the definition of big omega

Pick a c = which belongs to R^(>=0) don't actually pick a c until the proof is done

Pick a B = which belongs to N, again don't pick until your done the proof

Assume the antecedent of the big omega proof

Then write in the consequent so we can simplify the functions in order to solve for the c and B, you should use different subscripts to distinguish the antecedent from the consq.

we know that n >= B subscript a which is the B from the antecedent

assuming previous knowledge on algebra we know that c (subscript b so we can show the c were using to show that the consq. is true) * (f^2(n)) is the same as c(subscript b) *( f(n) * f(n))

c(subscript B) * (f(n) * f(n) we know is equal to c(sub B) f(n) * c(sub B) f(n)

If you dont think this is true you can always try some examples choose the same c, and try it with a function, basically what the above is saying is for every g(n) from (g^(n) = g(n) * g(n)) there is one c(sub B) * f(n)

After this you can just multiply and get c^2(sub b) = f^2(n) which is also the same as (f * f ) (n)

Then you just conclude with the original proof given

****** I really wanted to just use words, hopefully people who find this type of proof difficult will understand now, but I'm assuming everyone should know how to do this. This is one of the first proofs I actually figured out myself during the lectures, and tutorial so funny enough it is a big accomplishment

Reviewing, and Looking Back at proof

Hopefully I didn't make a mistake in explaining this proof, I think the most important part for proofs like this is structuring, knowing the definition of for instance, the big oh, omega etc. and also knowing some different algebraic techniques. One thing that made it difficult was the fact that we didn't actually give a value to c. From before proofs we actually gave a number, constant in this case, but after you realize its just a general form, its not that difficult to understand.

Quote for the day

" Study hard for final exams, and try your best, the effort is all that counts "

Some slogs that I found very helpful

http://csc165.peet.io/ - For the coin problem he implemented some code, which I thought was very cool

http://logic-station.blogspot.ca/ - Simple and understandable explanation of halting, and counting steps of complex algorithms